“With remarkable ease, we form and sustain false beliefs. Led by our preconceptions, overconfident, persuaded by vivid anecdotes, perceiving correlations and control even where none may exist, we construct our social beliefs and then influence others to confirm them … The most consistent finding of psychology is that human thought and behavior are predictably irrational. No human, regardless of intelligence, consistently forms accurate beliefs. Many people like to believe that they objectively and critically examine the information they gain from experience and use it to form true beliefs. Without knowing it, though, humans usually form their beliefs for social reasons and actively deny the possibility of being wrong. The human mind is not optimized to discover and believe the truth. Instead, it tends to delude itself.

[I]f anything, laboratory procedures overestimate our intuitive powers. The experiments usually present people with clear evidence and warn them that their reasoning ability is being tested. Seldom does real life say to us: ‘Here is some evidence. Now put on your intellectual Sunday best and answer these questions.’ … to cope with reality, we simplify it.” [1]

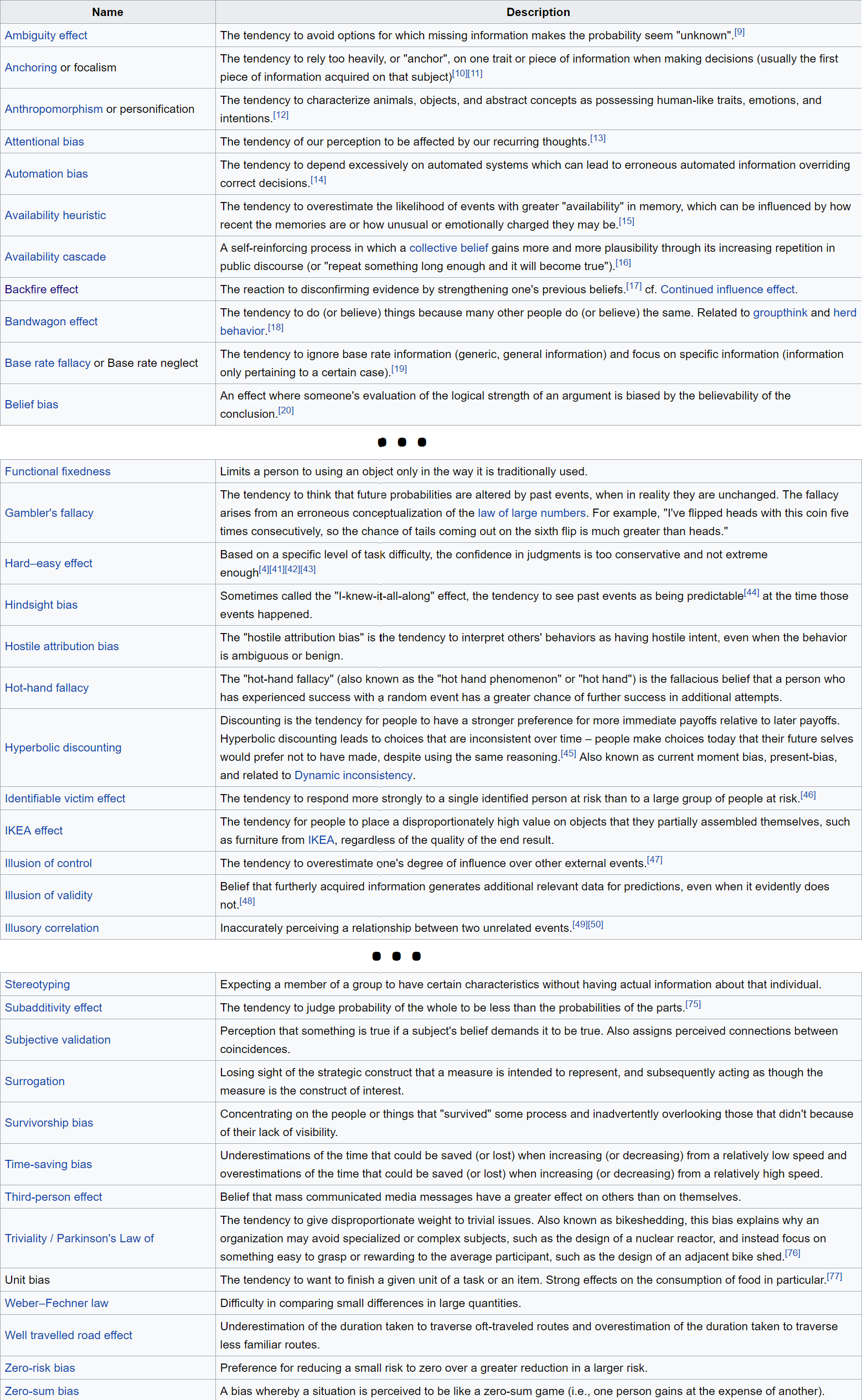

Common mistakes in reasoning that stem from human psychology are called cognitive biases, formally defined as "the systematic patterns of deviation from norm or rationality in judgment, whereby inferences about other people and situations may be drawn in an illogical fashion." The biggest problems with cognitive biases are how difficult they are to overcome, how pervasively they affect everyone's reasoning, and how people tend to ignore their influence on their own reasoning.

Below, I give an overview of cognitive biases and review research on some of the most important ones, which lead everyone into self-delusion. All of these should reduce your confidence in your own beliefs and lead you to consider the possibility that many of them are unjustified or wrong. [2]

1. Hundreds of cognitive biases distort everyone's thinking, making it inaccurate and unreliable.

2. Many overconfidence biases cause everyone to overestimate the probability that they are right and underestimate the probability that they are wrong.

3. Confirmation bias makes everyone seek out evidence that they are right and ignore evidence that they are wrong.

4. The binary bias makes everyone force many of their perceptions into known categories and either distort or ignore perceptions that do not fit those categories.